GPT-5.5 Is OpenAI's Strongest Agentic Coding Model - Codex Gets the Upgrade Today

Summary Report

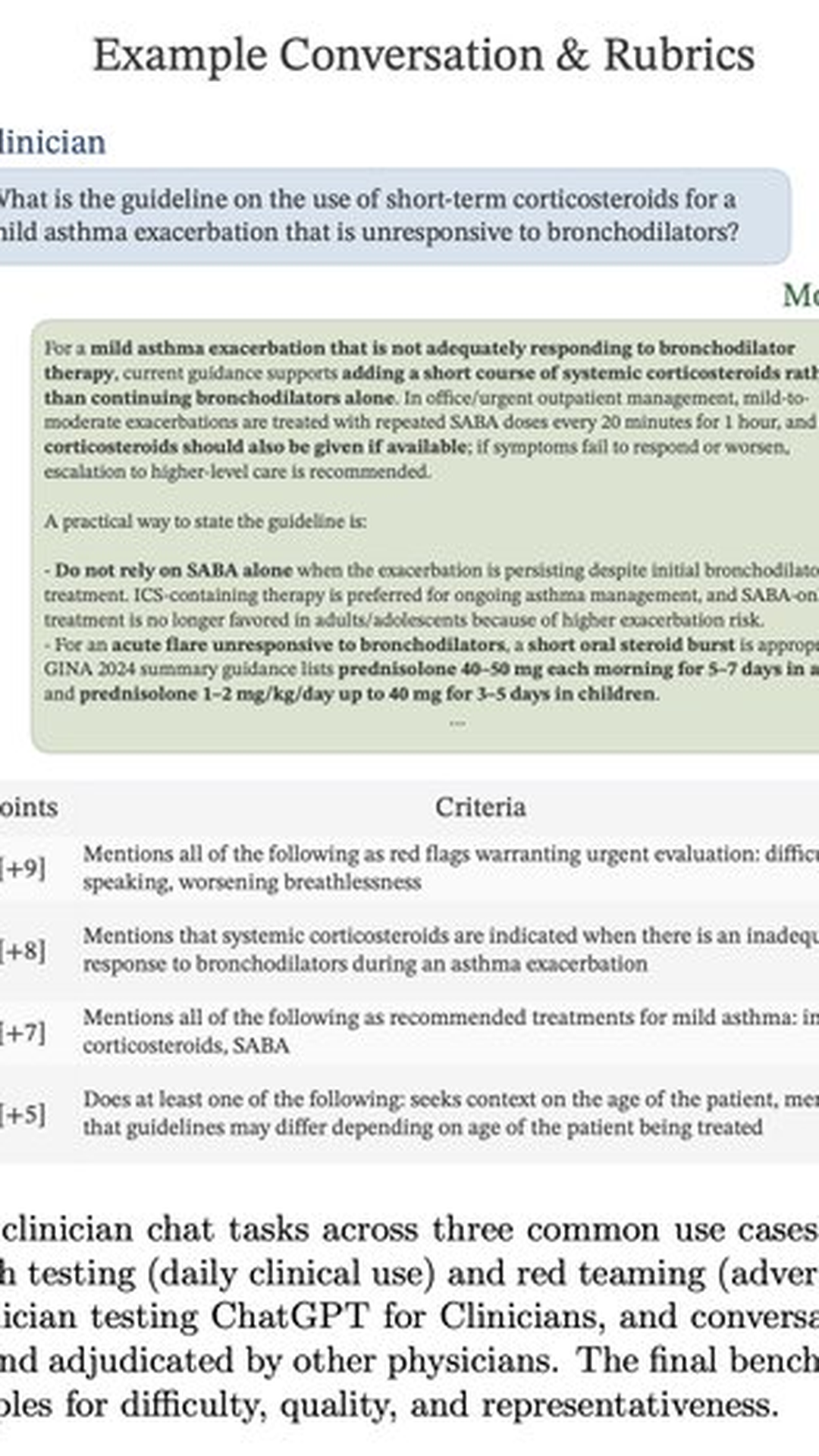

OpenAI has released GPT-5.5, its strongest agentic coding model yet, hitting 82.7 percent on Terminal-Bench 2.0 and 58.6 percent on SWE-Bench Pro while matching GPT-5.4's latency.

- 01. GPT-5.5 is OpenAI's new strongest agentic coding model, succeeding GPT-5.4.

- 02. Scores 82.7 percent on Terminal-Bench 2.0 for command-line workflows.

- 03. Hits 58.6 percent on SWE-Bench Pro for real GitHub issue resolution.

- 04. Integrated into Codex for end-to-end codebase understanding, changes, debugging, and validation.

- 05. Matches GPT-5.4 per-token latency while using fewer tokens per task.

OpenAI has released GPT-5.5, positioning it as the company's most capable agentic coding model to date. The model demonstrates significant improvements in automated programming tasks, scoring 82.7% on Terminal-Bench 2.0, a benchmark specifically designed to evaluate performance on long-running command-line operations.

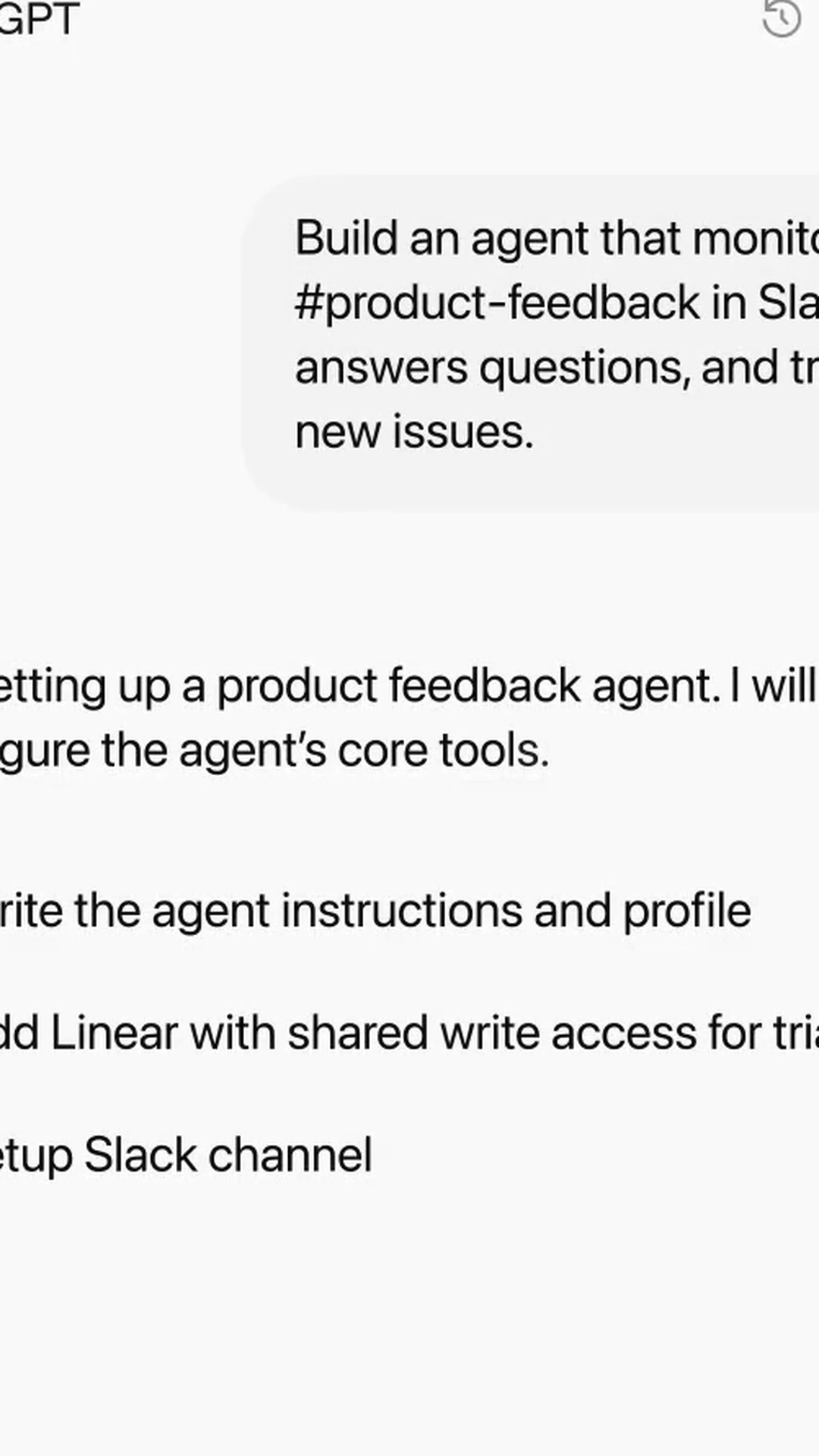

The new model represents a clear evolution from GPT-5.4, with enhanced end-to-end coding capabilities integrated into OpenAI's Codex platform. GPT-5.5 can autonomously read existing codebases, implement necessary changes, debug issues, run tests, and validate results within a single operational cycle. This streamlined approach reduces the need for human intervention across the development pipeline.

On SWE-Bench Pro, which measures real-world GitHub issue resolution, GPT-5.5 achieved a 58.6% success rate. That is a modest gain of 0.9 percentage points over GPT-5.4's 57.7%, but OpenAI claims the new model reaches this level whilst consuming fewer tokens per task, which would make it more efficient as well as slightly more accurate.
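To put the SWE-Bench Pro gap in concrete terms, the short sketch below computes the absolute and relative difference between the two reported scores. Only the 58.6 and 57.7 figures come from the article; the function name `score_delta` is purely illustrative.

```python
def score_delta(new: float, old: float) -> tuple[float, float]:
    """Return (gain in percentage points, relative gain in percent)."""
    absolute = new - old                 # difference in percentage points
    relative = absolute / old * 100      # gain relative to the old score
    return round(absolute, 2), round(relative, 2)


# Scores as reported in the article: GPT-5.5 vs GPT-5.4 on SWE-Bench Pro.
abs_gain, rel_gain = score_delta(58.6, 57.7)
print(f"{abs_gain} points absolute, {rel_gain}% relative")
```

The distinction matters when comparing headlines: a gain quoted in percentage points and a gain quoted as a relative percentage describe the same result with different numbers.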

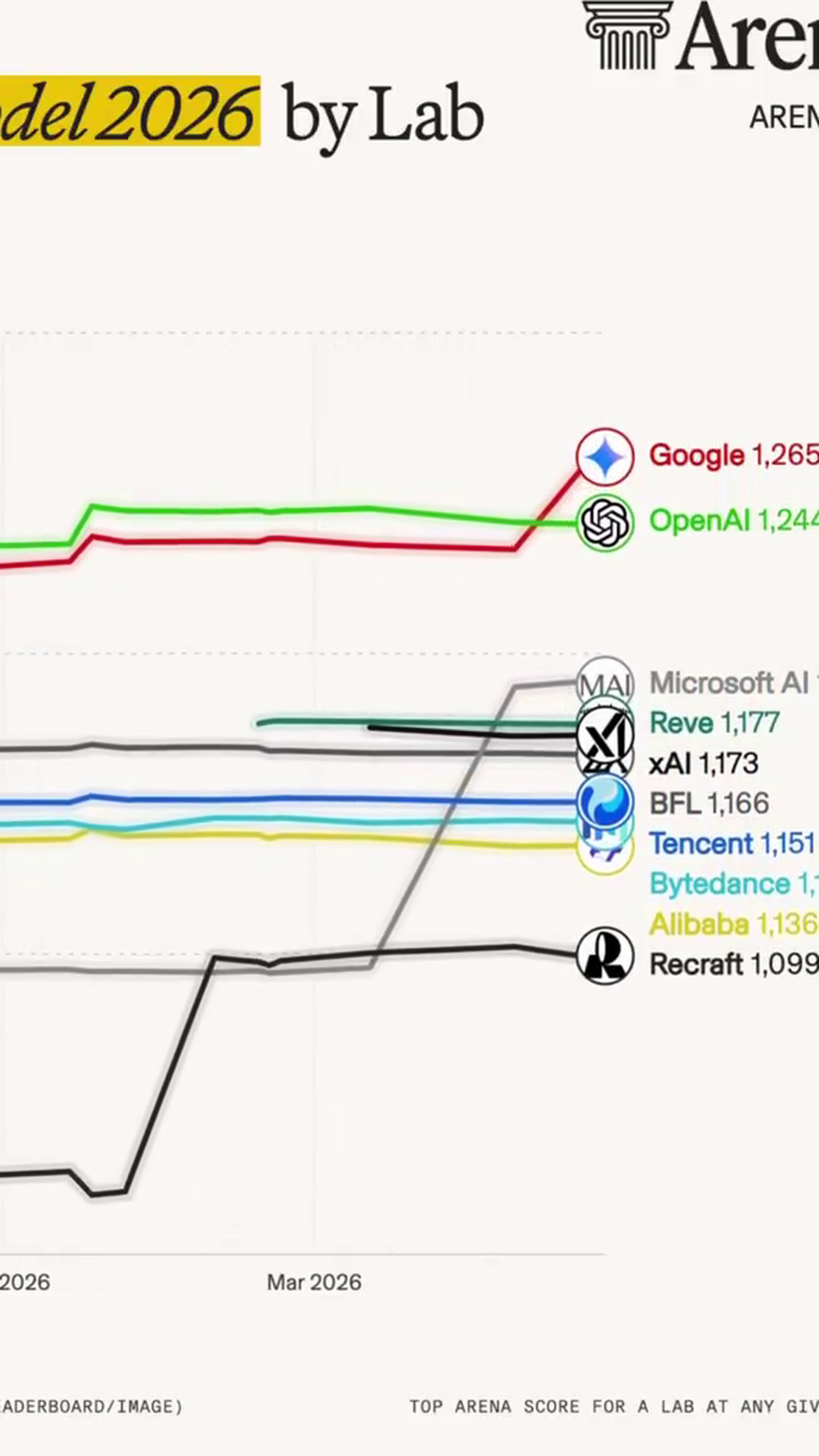

Perhaps most notably, OpenAI asserts that GPT-5.5 matches its predecessor's per-token latency in production environments. If the claim holds, it challenges the typical trade-off between model capability and response speed and suggests meaningful optimisations in the underlying architecture. The release signals continued progress in the competitive landscape of AI-powered coding tools, where the focus remains on extending how long a model can operate autonomously without sacrificing performance.
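The latency and token-efficiency claims combine in a simple way: if per-token latency is unchanged and a task needs fewer tokens, the time spent generating output for that task falls in proportion. The sketch below illustrates this with made-up numbers. The article gives no token counts or latency figures, so every value here is hypothetical, and the model ignores tool execution time and other overheads.

```python
def generation_seconds(tokens: int, seconds_per_token: float) -> float:
    """Time spent generating output, assuming a constant per-token latency."""
    return tokens * seconds_per_token


# Hypothetical values for illustration only (not from OpenAI or the article).
SECONDS_PER_TOKEN = 0.02   # assumed identical for both models
old_tokens = 10_000        # assumed tokens per task for the older model
new_tokens = 8_000         # assumed tokens per task for the newer model

old_time = generation_seconds(old_tokens, SECONDS_PER_TOKEN)
new_time = generation_seconds(new_tokens, SECONDS_PER_TOKEN)
saving = 1 - new_time / old_time   # fraction of generation time saved

print(f"old: {old_time:.0f}s, new: {new_time:.0f}s, saving: {saving:.0%}")
```

Under these assumptions the saving in generation time equals the reduction in token count, which is why equal per-token latency plus fewer tokens implies faster task completion.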
