Claude Mythos Hits 16-Hour Time Horizon - METR Says It Has Saturated Their Benchmark

Duration: 0:58

Summary Report

METR's evaluation of Claude Mythos Preview puts its 50%-time-horizon at 16+ hours, the upper end of what their task suite can measure. The doubling time across frontier models is now around 105 days.

- 01. Claude Mythos Preview reached a 50%-time-horizon of at least 16 hours on METR's software benchmark.

- 02. The 95% confidence interval spans 8.5 to 55 hours; it is this wide because only five of METR's 228 tasks exceed 16 hours.

- 03. METR ran the evaluation in March 2026 ahead of Anthropic's deployment decisions.

- 04. For comparison, Opus 4.6 and GPT-5.2 sat around 5-6 hours; Sonnet 3.7 was closer to 2; GPT-4o was about 7 minutes.

- 05. The doubling time across frontier models is around 105 days, which compounds to roughly an 11-fold increase, or over 1,000% growth, per year.

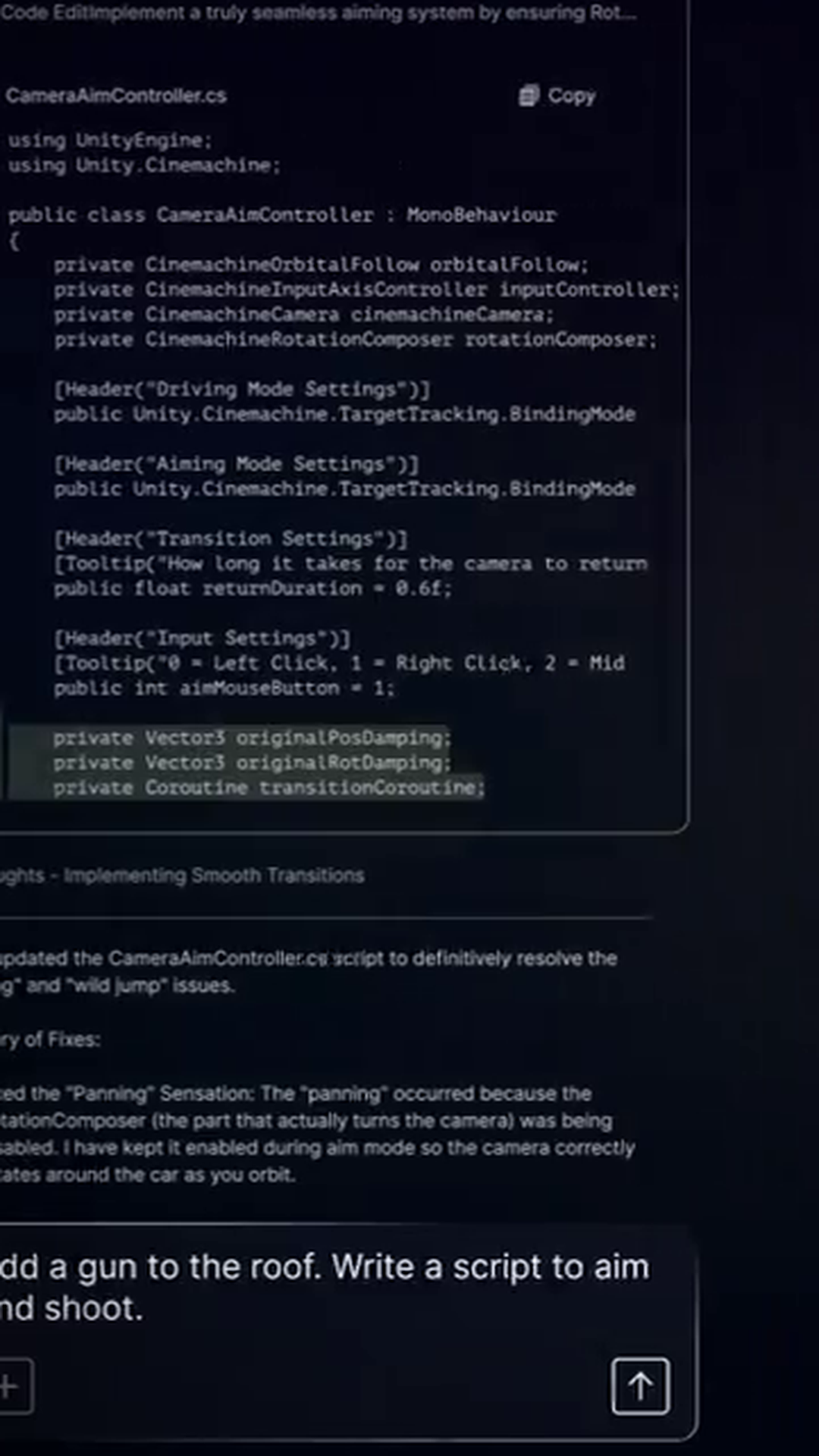

METR's latest evaluation of Claude Mythos Preview shows a large jump in AI capability: the model achieves a 50% success rate on tasks that take human experts at least 16 hours to complete. That is the upper limit of what METR can currently measure on its software benchmark, so the model has effectively saturated the task suite.

The 50%-time-horizon is the length of task, measured by how long it takes a human expert, at which the AI still succeeds half the time. METR conducted this assessment in March 2026, prior to Anthropic's deployment decisions. The 95% confidence interval spans 8.5 to 55 hours. The range is this wide because only five tasks in METR's 228-task suite exceed the 16-hour threshold, leaving very little data to pin down the upper end.
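To make the definition concrete, here is a minimal sketch of how a 50% horizon can be read off a success curve. It assumes success probability follows a logistic curve in the logarithm of human task time; that functional form, the function names, and the coefficients are illustrative assumptions, not METR's published code or fitted values.

```python
import math


def success_probability(human_minutes: float, intercept: float, slope: float) -> float:
    """Assumed logistic model: P(success) as a function of log2(human task time)."""
    z = intercept + slope * math.log2(human_minutes)
    return 1.0 / (1.0 + math.exp(-z))


def fifty_percent_horizon(intercept: float, slope: float) -> float:
    """Task length (minutes) where the assumed curve crosses 50%.

    P = 0.5 exactly when z = 0, i.e. log2(t) = -intercept / slope.
    """
    return 2.0 ** (-intercept / slope)


# Hypothetical coefficients, chosen only so the curve crosses 50% at 16 hours.
slope = -0.6  # negative: longer tasks are less likely to succeed
intercept = -slope * math.log2(16 * 60)

horizon = fifty_percent_horizon(intercept, slope)
print(f"50% horizon: {horizon / 60:.1f} hours")
print(f"P(success) on a 1-hour task: {success_probability(60, intercept, slope):.2f}")
```

With few tasks longer than the crossing point, the slope and intercept of such a curve are poorly constrained at the top end, which is one way to see why the reported interval is so wide.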

The leap is stark when compared to previous models. Opus 4.6 and GPT-5.2 achieved time horizons of 5-6 hours, whilst Sonnet 3.7 managed around 2 hours. GPT-4o, evaluated in mid-2024, reached only about 7 minutes. Across this progression, the time horizon of frontier models has doubled roughly every 105 days, which compounds to over 1,000% growth annually.
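The link between a 105-day doubling time and the annual growth figure is simple compounding, sketched below. The function name is illustrative, and the calculation assumes steady exponential growth over a 365-day year.

```python
def annual_growth_factor(doubling_days: float, days_per_year: float = 365.0) -> float:
    """Multiplicative growth over one year, given a constant doubling time."""
    doublings_per_year = days_per_year / doubling_days
    return 2.0 ** doublings_per_year


factor = annual_growth_factor(105)
percent_growth = (factor - 1.0) * 100.0  # growth is the increase over the starting value
print(f"{factor:.1f}x per year, about {percent_growth:.0f}% growth")
```

A 105-day doubling time gives about 3.5 doublings per year, or roughly an 11-fold increase, which is where "over 1,000%" comes from.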

METR cautions against using these figures for precise model comparisons, noting that its measurement infrastructure is struggling to keep pace with rapid AI advancement. New evaluation tasks are in development to assess these increasingly capable systems, but for now Mythos has reached the ceiling of what the current benchmark can measure.