
SubQ Debuts With 12M Tokens and a New Attention Architecture - 52x Faster Than FlashAttention


Summary Report

Subquadratic launched SubQ, the first frontier LLM built on sub-quadratic sparse attention. It ships with a 12M-token context window, and the company claims a 52x speed-up over FlashAttention and a running cost under 5% of that of Anthropic's Opus.

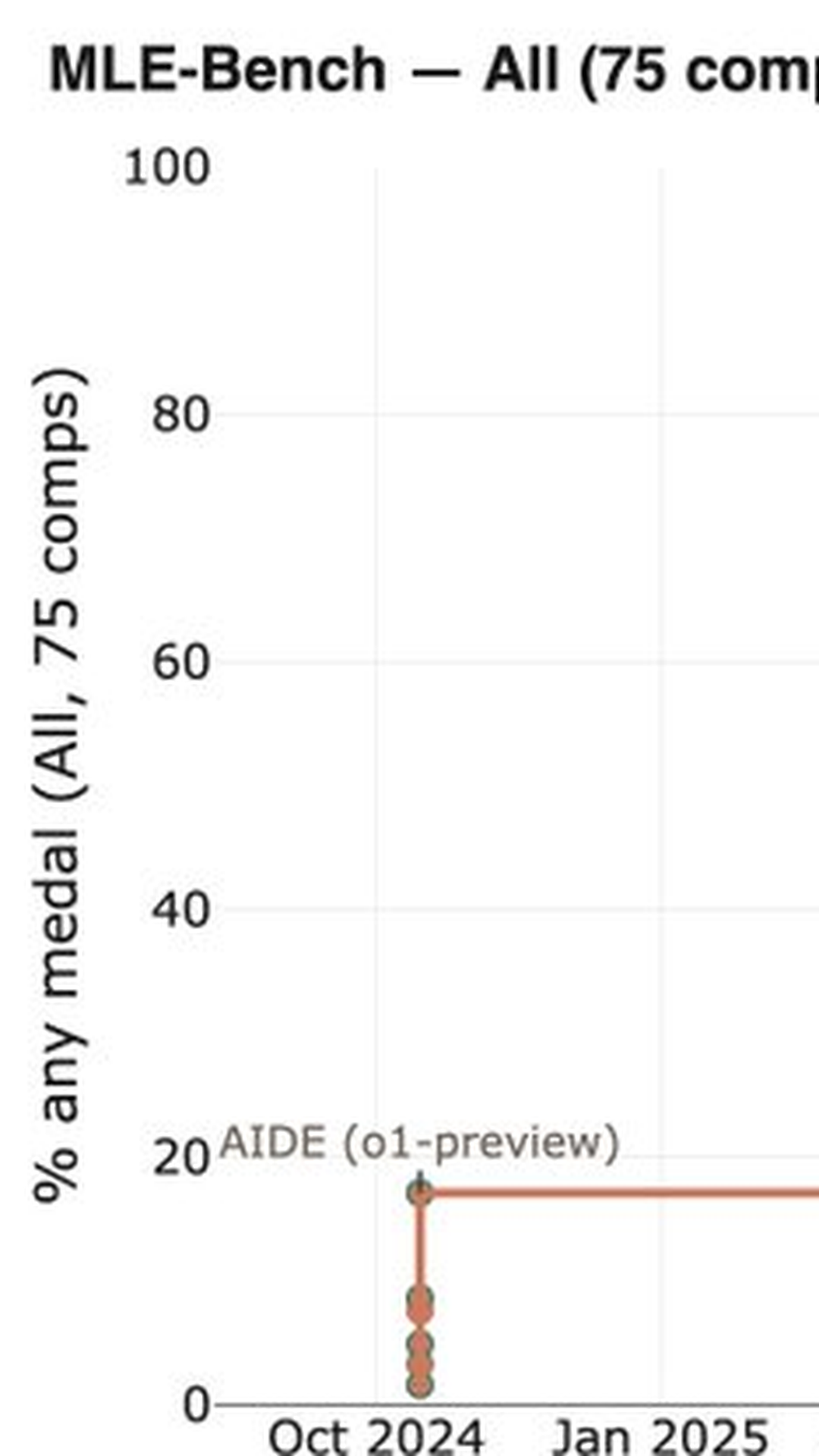

- 01. Subquadratic emerged from stealth with $29M in seed funding and SubQ, a frontier model built on sub-quadratic sparse attention rather than standard transformer attention.

- 02. SubQ ships with a 12-million-token context window, the largest of any frontier model to date.

- 03. The company claims SubQ runs 52x faster than FlashAttention at 1 million tokens and costs under 5% as much as Anthropic's Opus.

- 04. At the full 12M context window, Subquadratic claims nearly 1,000x less compute than rival frontier models.

- 05. All benchmarks are self-reported and have not yet been independently verified.

Subquadratic has emerged from stealth mode with $29 million in seed funding and claims to have developed the most significant architectural breakthrough since the original transformer paper. Its new model, SubQ, abandons standard attention mechanisms entirely in favour of what the company terms "sub-quadratic sparse attention" (SSA), whilst offering an unprecedented 12-million-token context window.

The company's performance claims are striking. SubQ allegedly operates 52 times faster than FlashAttention when processing one million tokens and costs under 5% of Anthropic's Opus model to run. At its maximum 12-million-token context length, Subquadratic claims the model requires nearly 1,000 times less compute than competing frontier models.

The core innovation lies in addressing what Subquadratic identifies as a fundamental inefficiency in transformer architecture. Traditional transformers compute attention scores for every possible token pair, regardless of relevance, so the work grows with the square of the sequence length. SSA attempts to learn which token relationships actually matter and skips the computation for irrelevant pairs, potentially solving the quadratic scaling problem that has limited context lengths in large language models.
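The idea of attending only to the pairs that matter can be illustrated with a toy top-k attention in plain Python. This is a sketch under stated assumptions, not Subquadratic's method: the source does not describe how SSA selects pairs, so simple top-k selection is swapped in as a stand-in, and `topk_attention`, `pair_counts`, and the per-token budget `k` are hypothetical names introduced here. The toy also still scores every pair before keeping the top k, so it shows the sparsity pattern rather than any speed-up.

```python
import math


def topk_attention(queries, keys, values, k):
    """Toy sparse attention: each query attends only to its k best keys.

    Assumption: top-k selection is an illustrative stand-in; the source
    does not say how SSA chooses which token pairs to keep.
    With k == len(keys) this reduces to ordinary dense softmax attention.
    """
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Scaled dot-product score of this query against every key.
        # (A genuinely sub-quadratic method must avoid this full pass.)
        scores = [
            sum(a * b for a, b in zip(q, key)) / math.sqrt(dim)
            for key in keys
        ]
        # Indices of the k highest-scoring keys.
        keep = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
        # Numerically stable softmax over the kept keys only.
        peak = max(scores[i] for i in keep)
        weights = [math.exp(scores[i] - peak) for i in keep]
        total = sum(weights)
        # Weighted average of the kept value vectors.
        outputs.append([
            sum(w * values[i][d] for w, i in zip(weights, keep)) / total
            for d in range(len(values[0]))
        ])
    return outputs


def pair_counts(n_tokens, k):
    """Return (dense, sparse) numbers of token pairs that get attended to.

    Dense attention covers n * n pairs; a fixed budget of k keys per
    token covers n * k pairs. k is a hypothetical parameter.
    """
    return n_tokens * n_tokens, n_tokens * k


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    values = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
    print(topk_attention([[3.0, 1.0]], keys, values, k=1))
    print(pair_counts(1_000_000, 1_000))  # (1000000000000, 1000000000)
```

Under this simplified model the ratio of dense to sparse pairs is `n_tokens / k`, so the gap widens as the context grows. The numbers are illustrative and do not reproduce or verify Subquadratic's reported figures.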

However, significant caveats remain. All performance benchmarks are self-reported by Subquadratic, and the broader AI research community has yet to independently verify these claims. Until peer review and external validation occur, the extraordinary assertions remain unproven, though potentially transformative for the field if substantiated.
